# A tibble: 6 × 7

SPCompIndexPercentChange CPIPercentChange LongInterestRatePercentChange

<dbl> <dbl> <dbl>

1 0.04 0.00296 0.110

2 0.02 0.00821 0.0366

3 0.02 0.00258 -0.0101

4 0.01 -0.00103 -0.102

5 0.04 0.00181 0.0966

6 -0.01 0.00236 0.192

# ℹ 4 more variables: RealEarningsPercentChange <dbl>,

# CyclicallyAdjPEPercentageChange <dbl>, CAPETotalPercentageChange <dbl>,

# RealTotalBondReturnPercentageChange <dbl>

K-means Clustering Using Stocks Data

Michael Wanek, Woodny Dorceans

2023-08-01

Introduction

K-means clustering (KMC) is an unsupervised machine learning method

It analyzes and clusters unlabeled data sets

K-means measures the distance between each object and each centroid, then assigns the object to the group with the nearest centroid, until every object has a group.

K-Means Process

1. Initial stage: partition the objects randomly into ‘k’ clusters.

2. Repetition stage: calculate the center of each cluster as the mean of its data, compute the squared Euclidean distance from each object to each cluster center, and compute the squared error function.

3. Improvement stage: assign each object to the cluster with the nearest center.

4. Stop stage: repeat the process until no object moves between clusters or the objective function value no longer decreases.
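The four stages above can be sketched in base R. This is a minimal illustration on synthetic two-dimensional data, not the code used in the project; the data, the choice of k = 2, and all variable names are assumptions made for the example.

```r
# Minimal sketch of the four K-means stages (base R only, synthetic data).
set.seed(42)
x <- rbind(matrix(rnorm(40, mean = 0), ncol = 2),
           matrix(rnorm(40, mean = 5), ncol = 2))  # 40 points in two blobs
k <- 2

# Stage 1: random initial partition into k clusters
# (rep_len guarantees that no cluster starts empty).
cluster <- sample(rep_len(seq_len(k), nrow(x)))

repeat {
  # Stage 2: each center is the column mean of its cluster's members.
  centers <- t(sapply(seq_len(k), function(j)
    colMeans(x[cluster == j, , drop = FALSE])))

  # Squared Euclidean distance from every object to every center.
  d2 <- sapply(seq_len(k), function(j)
    rowSums(sweep(x, 2, centers[j, ])^2))

  # Stage 3: reassign each object to the cluster with the nearest center.
  new_cluster <- max.col(-d2, ties.method = "first")

  # Stage 4: stop when no object changes cluster.
  if (all(new_cluster == cluster)) break
  cluster <- new_cluster
}

# Squared-error objective at convergence.
sse <- sum(d2[cbind(seq_len(nrow(x)), cluster)])
```

In practice the built-in kmeans() function would be used instead; the loop is only meant to make the stages visible.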

K-Means Cluster

K-Means Limitations

Determining the number of clusters, ‘k’

Results depend on the distance calculation method chosen

KMC cannot be used with all types of data

Methods

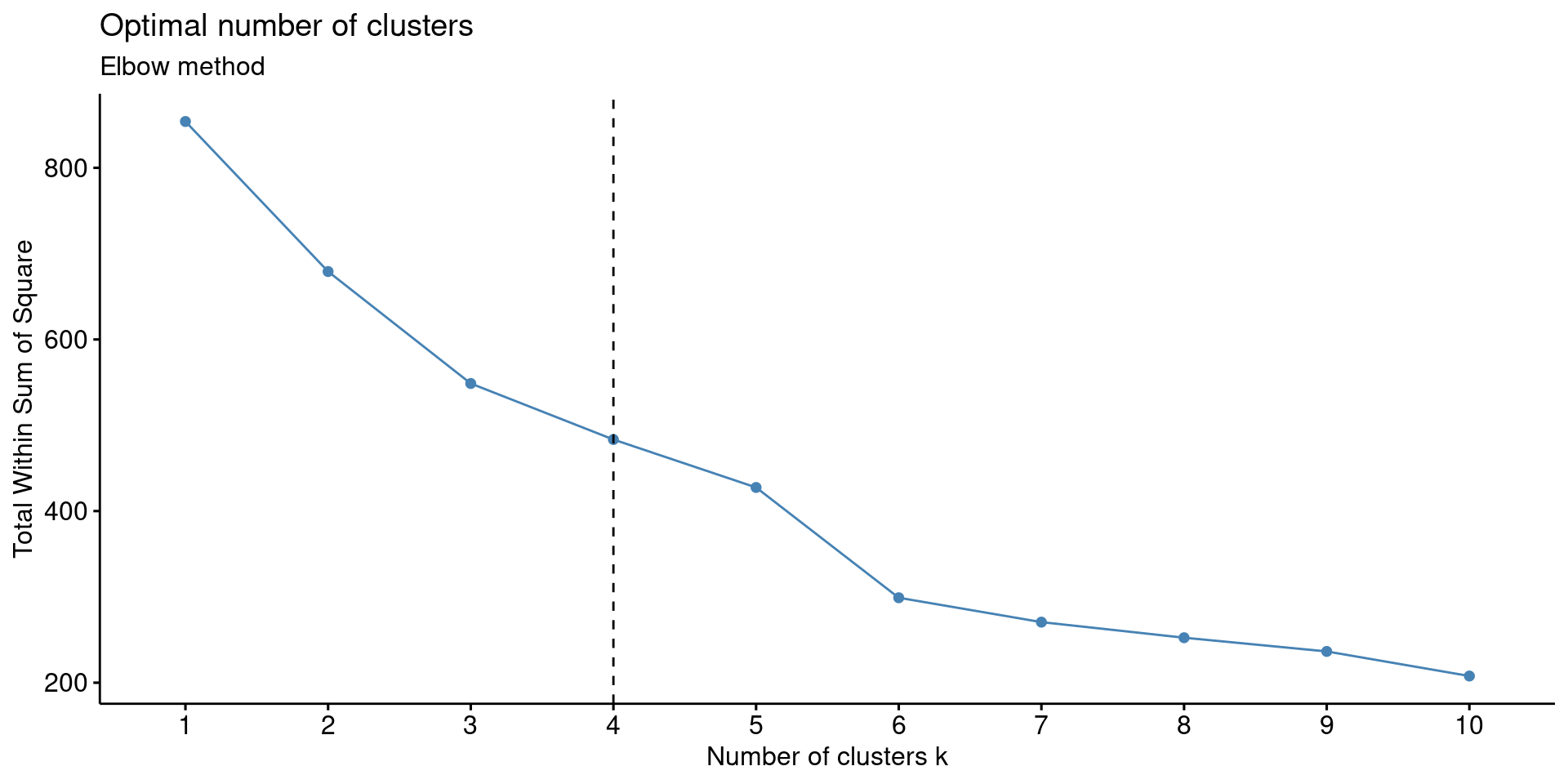

The Elbow Method is used to find a good number of clusters by looking for the point where the sum of squared errors (SSE) stops decreasing rapidly. SSE measures how far each point is from the center of its cluster; points that sit close to their cluster centers give a small SSE.
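A rough sketch of the Elbow Method using only base R. The three-blob synthetic data set and the range k = 1 to 8 are assumptions for illustration; the project itself used fviz_nbclust() on the S&P 500 data.

```r
# Elbow Method sketch: total within-cluster SSE for several values of k.
set.seed(1)
x <- scale(rbind(matrix(rnorm(100, mean = 0), ncol = 2),
                 matrix(rnorm(100, mean = 4), ncol = 2),
                 matrix(rnorm(100, mean = 8), ncol = 2)))  # 150 synthetic points

ks  <- 1:8
sse <- sapply(ks, function(k) kmeans(x, centers = k, nstart = 25)$tot.withinss)

# The elbow is the k after which the SSE stops dropping sharply.
print(data.frame(k = ks, sse = round(sse, 1)))
```

Calling plot(ks, sse, type = "b") on the same vectors draws the familiar elbow curve.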

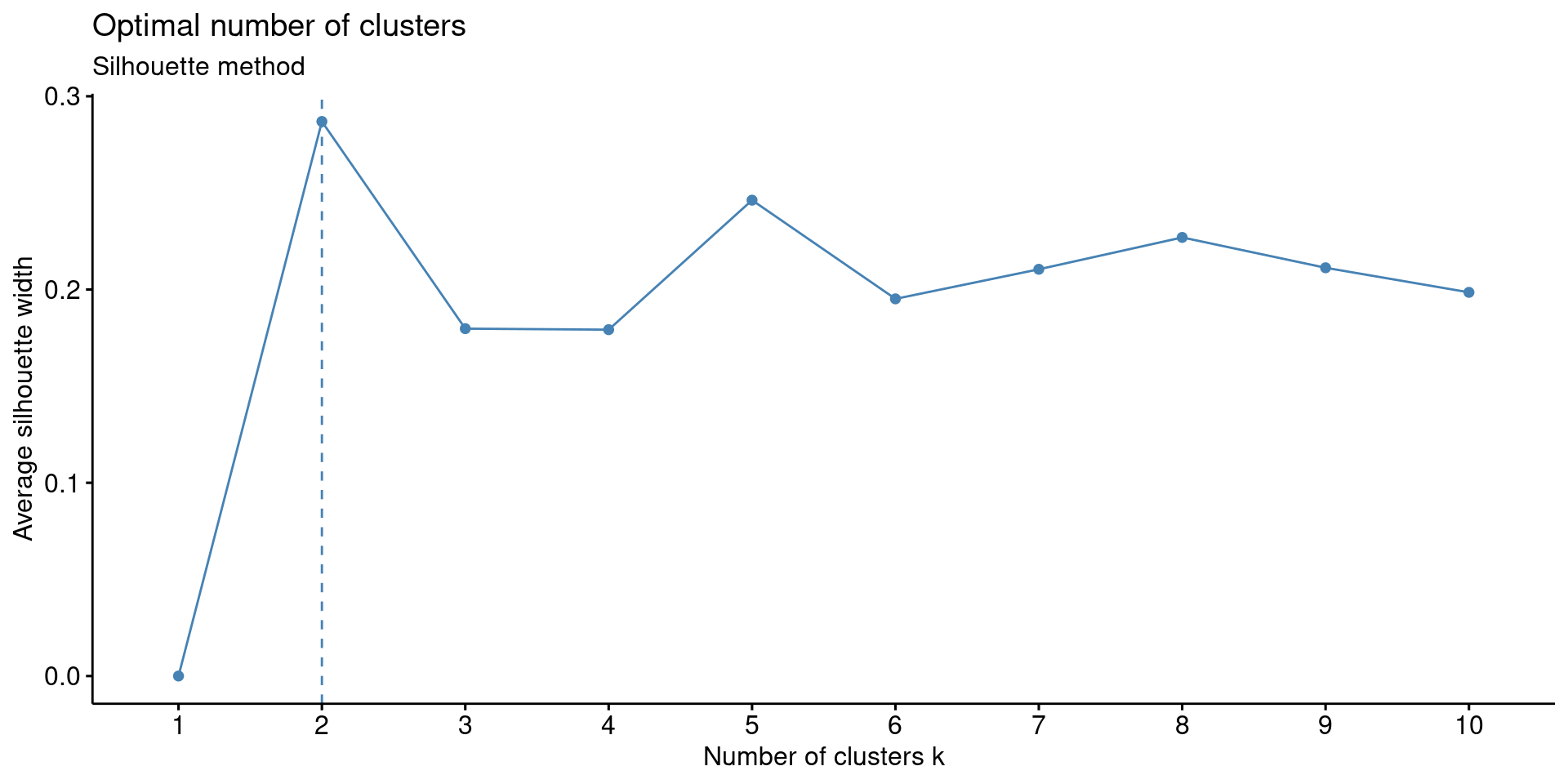

The Silhouette method is used to determine how well each data point fits into its cluster. It does so by comparing how close the data point is to its own cluster with how close it is to the other clusters.
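The silhouette width can be computed by hand in base R, which may make the comparison concrete. The sketch below assumes synthetic data and a hypothetical helper named silhouette_width(); it is not the function the project called.

```r
# Silhouette sketch: s = (b - a) / max(a, b) for every point.
set.seed(2)
x <- rbind(matrix(rnorm(60, mean = 0), ncol = 2),
           matrix(rnorm(60, mean = 6), ncol = 2))  # 60 synthetic points
fit <- kmeans(x, centers = 2, nstart = 25)
d   <- as.matrix(dist(x))                          # pairwise Euclidean distances

# Hypothetical helper: silhouette width of point i.
silhouette_width <- function(i, cl, d) {
  own <- cl == cl[i]
  own[i] <- FALSE
  if (!any(own)) return(0)              # singleton cluster: width set to 0
  other <- cl != cl[i]
  a <- mean(d[i, own])                  # mean distance to its own cluster
  b <- min(tapply(d[i, other], cl[other], mean))  # nearest other cluster
  (b - a) / max(a, b)
}

s <- sapply(seq_len(nrow(x)), silhouette_width, cl = fit$cluster, d = d)
avg_width <- mean(s)   # values near 1 indicate well-separated clusters
```

Repeating this for several values of k and keeping the k with the highest average width gives the Silhouette choice of k.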

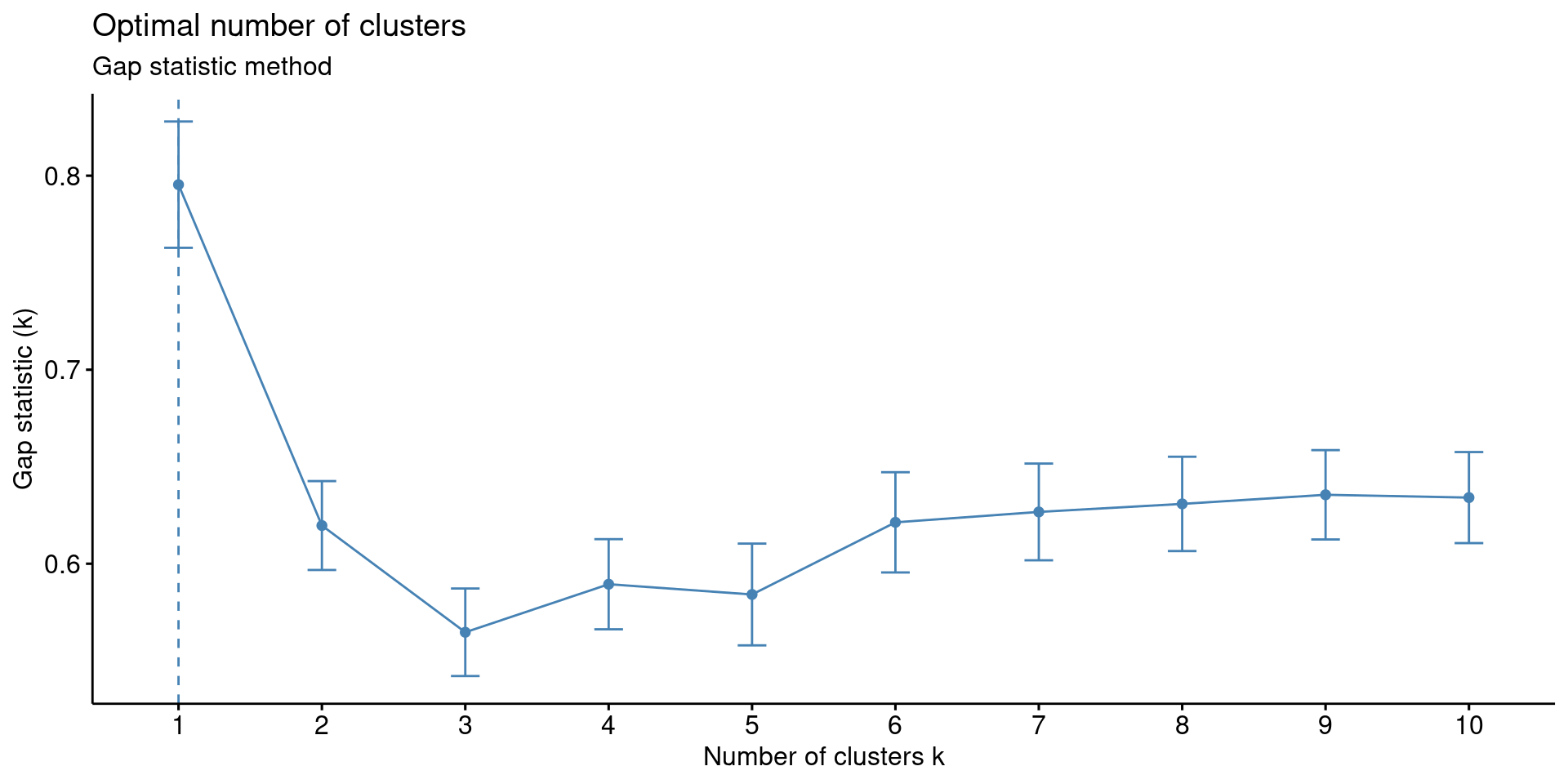

The Gap Statistic method compares the within-cluster dispersion for each k with the dispersion expected for reference data that has no cluster structure, and is used to find the k value with the largest gap.
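A simplified sketch of the gap computation in base R. The synthetic data, the number of reference draws B = 20, and the helper names log_w() and uniform_like() are assumptions; the selection rule here is simply "largest gap", as described above, rather than the standard-error rule some implementations use.

```r
# Gap Statistic sketch: gap(k) = mean(log W_k on reference data) - log W_k on the data.
set.seed(3)
x <- rbind(matrix(rnorm(60, mean = 0), ncol = 2),
           matrix(rnorm(60, mean = 6), ncol = 2))  # 60 synthetic points

# Hypothetical helper: log of the total within-cluster sum of squares.
log_w <- function(data, k) log(kmeans(data, centers = k, nstart = 10)$tot.withinss)

# Hypothetical helper: reference data drawn uniformly over each column's range.
uniform_like <- function(data) {
  apply(data, 2, function(col) runif(length(col), min(col), max(col)))
}

ks <- 1:5
B  <- 20
gap <- sapply(ks, function(k) {
  ref <- replicate(B, log_w(uniform_like(x), k))
  mean(ref) - log_w(x, k)
})

best_k <- ks[which.max(gap)]  # k with the largest gap
```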

Euclidean Distance

Used to calculate the distance between a point and the center of its initial cluster.

The Euclidean distance generalizes the Pythagorean theorem from two dimensions to as many dimensions as needed.

\[ d_{euc}(x, y) = \sqrt{\sum_{i = 1}^{n}{(x_i - y_i)^2}} \]
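The formula translates directly into R. The function name euclidean() is chosen for this example; base R's dist() computes the same quantity and is used here as a cross-check.

```r
# Euclidean distance between two numeric vectors of equal length.
euclidean <- function(x, y) sqrt(sum((x - y)^2))

d_2d <- euclidean(c(0, 0), c(3, 4))   # 5: the 3-4-5 right triangle

# The same formula works in any number of dimensions.
p <- c(1, 0, 2)
q <- c(4, 4, 2)
d_3d <- euclidean(p, q)               # sqrt(9 + 16 + 0) = 5

# Cross-check against base R's dist().
same_as_dist <- isTRUE(all.equal(d_3d, as.numeric(dist(rbind(p, q)))))
```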

Analysis and Results

S&P 500 index dataset

Variables: composite index, consumer price index, long-term interest rates, real earnings, cyclically adjusted price-to-earnings ratio (CAPE), and real total bond return

Data from 2012 to the present were converted to month-by-month percentage changes so that the clusters are meaningful

Robert Shiller’s CAPE measures valuation over a ten-year period to smooth out random fluctuations and signals undervalued or overvalued indexes or stocks

\[ \text{Cyclically adjusted price-to-earnings ratio} = \frac{\text{Share Price}}{\text{10-year Inflation-Adjusted Average Earnings}} \]
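The two calculations described above, the month-by-month percentage change and the CAPE ratio, can be sketched as follows. Every number here is hypothetical and chosen only to keep the arithmetic easy to follow; none of it comes from the S&P 500 data set.

```r
# Month-by-month percentage change from a series of index levels (hypothetical values).
index      <- c(100, 104, 106.08, 105.0192)
pct_change <- diff(index) / head(index, -1)
round(pct_change, 4)   # 0.04 0.02 -0.01

# CAPE: share price divided by the 10-year average of inflation-adjusted earnings.
price             <- 4000                      # hypothetical share price
real_earnings_10y <- seq(115, 205, by = 10)    # ten hypothetical annual real earnings
cape              <- price / mean(real_earnings_10y)   # 4000 / 160 = 25
```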

Data and Visualization

R packages relevant to the project included factoextra, NbClust, and cluster.

scale() in R was used to standardize the data (each variable centered to mean 0 and scaled to standard deviation 1) so that the variables are comparable.

| SPCompIndexPercentChange | CPIPercentChange | LongInterestRatePercentChange | RealEarningsPercentChange | CyclicallyAdjPEPercentageChange | CAPETotalPercentageChange | RealTotalBondReturnPercentageChange |
|---|---|---|---|---|---|---|
| 0.8965934 | 0.2026588 | 1.0060604 | -0.0869839 | 0.8407465 | 0.8474031 | -0.9274587 |
| 0.3396187 | 1.6529928 | 0.2579556 | -0.2818001 | 0.1198802 | 0.1423810 | -0.6077692 |
| 0.3396187 | 0.0983269 | -0.2158457 | -0.0736157 | 0.4154007 | 0.4217869 | 0.1363896 |
| 0.0611314 | -0.9012484 | -1.1476315 | 0.3626172 | 0.1549720 | 0.1611393 | 1.2431075 |
| 0.8965934 | -0.1167998 | 0.8654489 | 0.2482291 | 0.9826116 | 0.9936749 | -0.7642882 |
| -0.4958433 | 0.0365916 | 1.8294544 | 0.2183380 | -0.6941067 | -0.6890358 | -1.7469219 |
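To show what the standardization does, the sketch below applies scale() to the first two columns of the six raw rows printed at the top of this deck. Because it uses only those six rows rather than the full data set, the resulting values will differ from the table above; the object names are chosen for this example.

```r
# Standardize two columns of the six raw rows shown earlier (not the full data set).
raw <- cbind(
  SPCompIndexPercentChange = c(0.04, 0.02, 0.02, 0.01, 0.04, -0.01),
  CPIPercentChange         = c(0.00296, 0.00821, 0.00258, -0.00103, 0.00181, 0.00236)
)

scaled <- scale(raw)   # subtract each column mean, divide by each column sd

col_means <- colMeans(scaled)       # approximately 0 for every column
col_sds   <- apply(scaled, 2, sd)   # exactly 1 for every column, up to rounding
```

After scaling, a value such as 0.9 reads as "0.9 standard deviations above that variable's mean", which is what allows variables on very different scales to share one distance calculation.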

Data and Visualization (Methods)

- Elbow Method to determine the optimal number of clusters using fviz_nbclust().

- Silhouette Method to determine the optimal number of clusters; it measures how well a data point fits within its cluster using the Silhouette Coefficient.

- Gap Statistic Method to identify the optimal number of clusters using the logarithm of the within-cluster dispersion.

- NbClust() provides 30 indices for determining the optimal number of clusters; it was run with the “Euclidean” distance (Pythagorean Theorem) to find the relative distances between the data points.
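The first three methods in the list above can be requested from fviz_nbclust() through its method argument. The sketch below is an assumption about how such calls might look, run on synthetic data with the same shape as the project's data (123 rows, 7 columns); it is guarded so that it does nothing if the factoextra package is not installed.

```r
# Sketch: one fviz_nbclust() call per method, on synthetic stand-in data.
set.seed(10)
x <- scale(matrix(rnorm(123 * 7), ncol = 7))  # NOT the S&P 500 data

if (requireNamespace("factoextra", quietly = TRUE)) {
  p_elbow <- factoextra::fviz_nbclust(x, kmeans, method = "wss")         # Elbow
  p_sil   <- factoextra::fviz_nbclust(x, kmeans, method = "silhouette")  # Silhouette
  p_gap   <- factoextra::fviz_nbclust(x, kmeans, method = "gap_stat",
                                      nboot = 50)                        # Gap Statistic
}
```

Each returned object is a plot; printing it (for example, print(p_elbow)) draws the corresponding figure.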

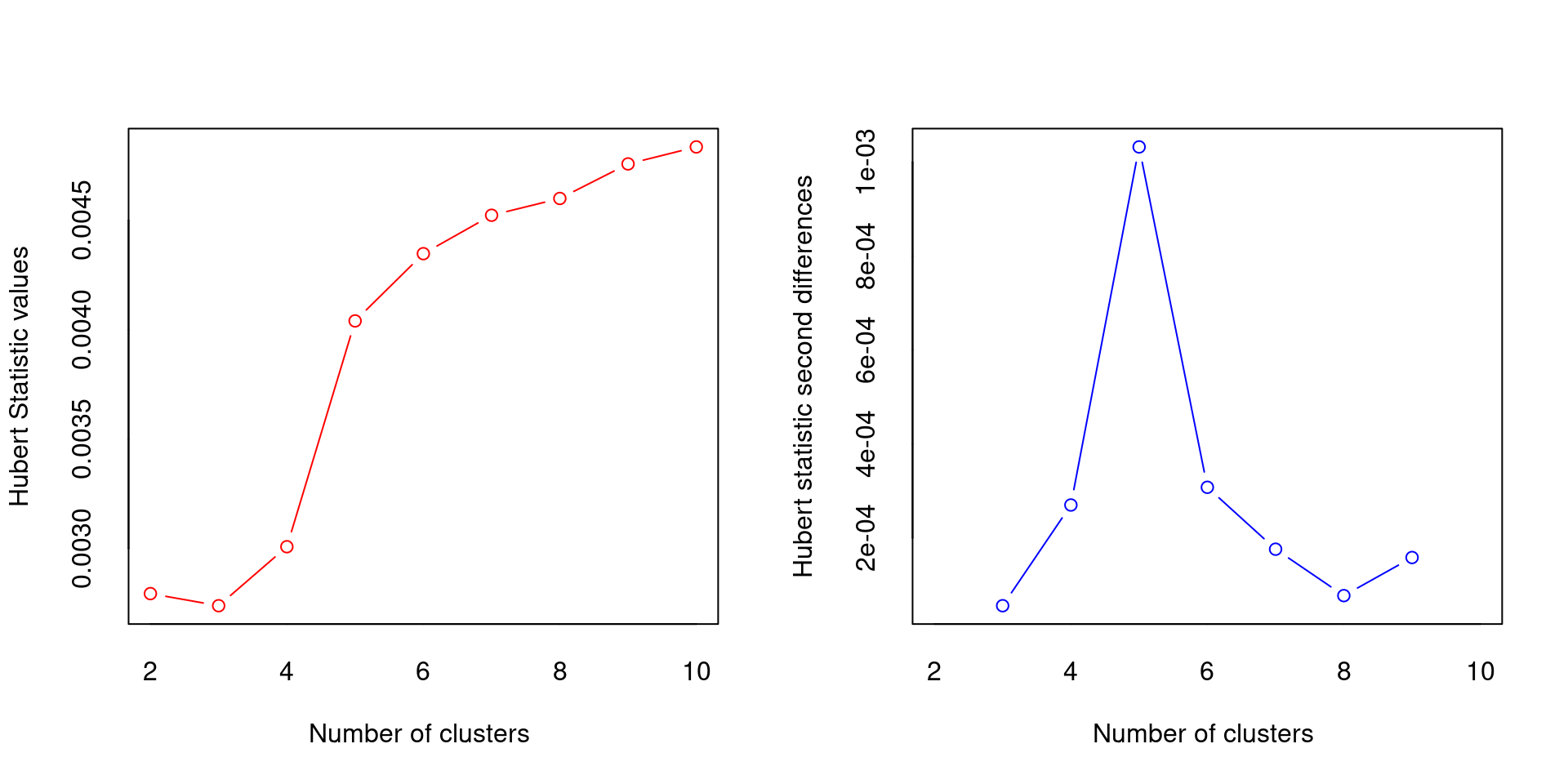

*** : The Hubert index is a graphical method of determining the number of clusters. In the plot of Hubert index, we seek a significant knee that corresponds to a significant increase of the value of the measure i.e the significant peak in Hubert index second differences plot.

*** : The D index is a graphical method of determining the number of clusters. In the plot of D index, we seek a significant knee (the significant peak in Dindex second differences plot) that corresponds to a significant increase of the value of the measure.

*******************************************************************

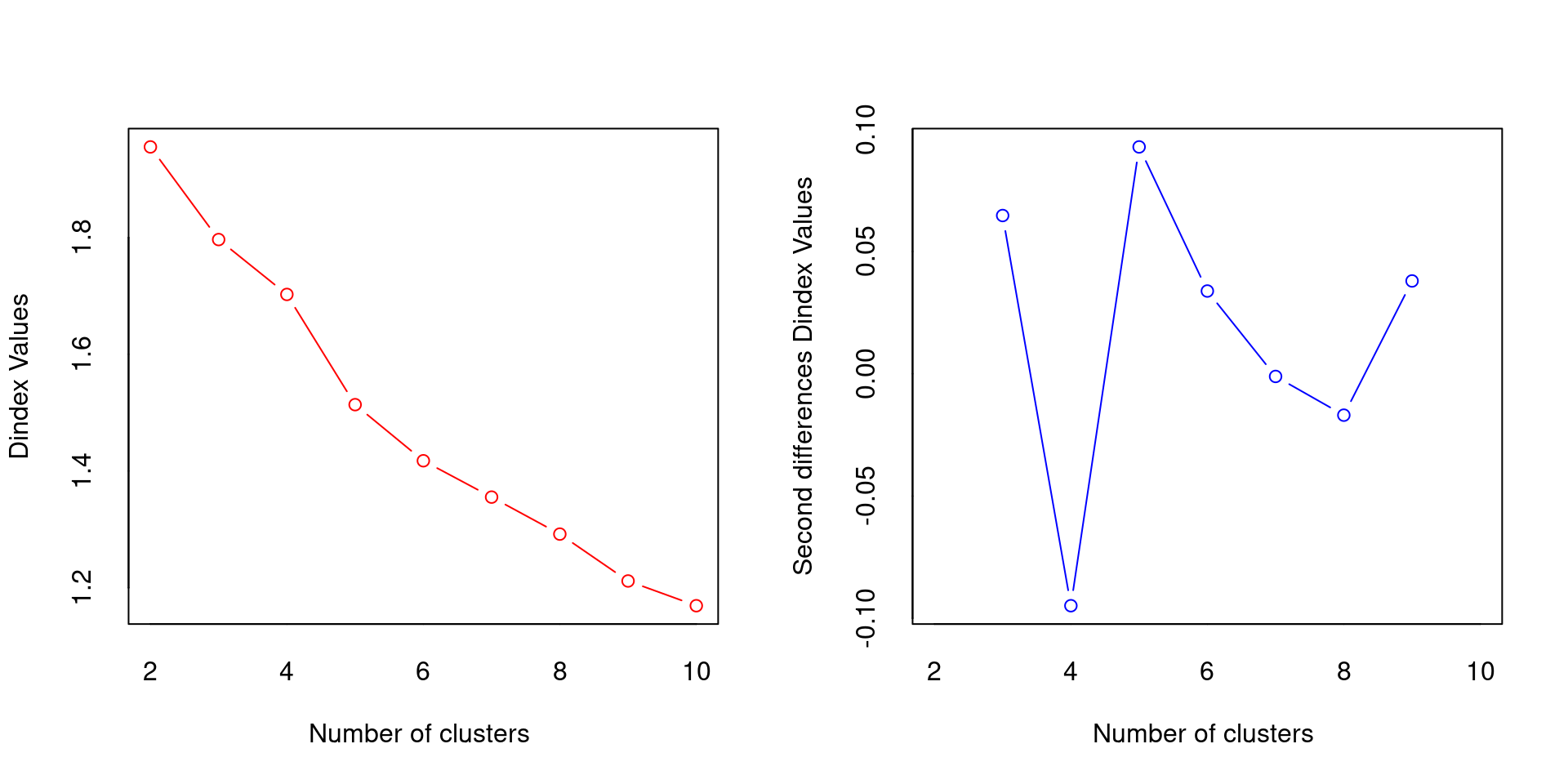

* Among all indices:

* 6 proposed 2 as the best number of clusters

* 2 proposed 3 as the best number of clusters

* 3 proposed 4 as the best number of clusters

* 6 proposed 5 as the best number of clusters

* 2 proposed 6 as the best number of clusters

* 1 proposed 7 as the best number of clusters

* 1 proposed 9 as the best number of clusters

* 3 proposed 10 as the best number of clusters

***** Conclusion *****

* According to the majority rule, the best number of clusters is 2

*******************************************************************

Data & Visualization

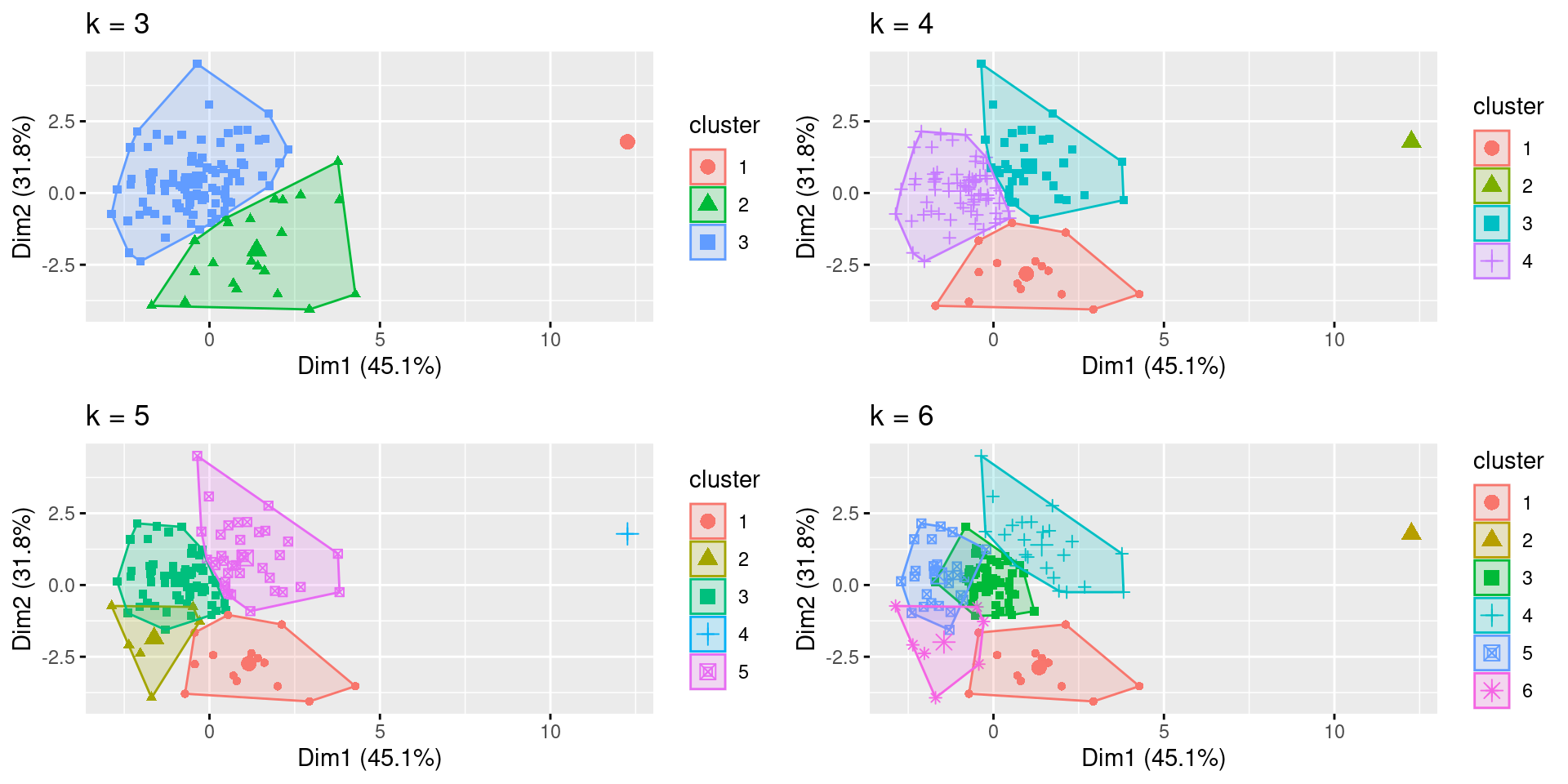

- fviz_cluster() plots the clusters for each candidate number of centroids, k=3 through k=6.

Data & Visualization

K-means clustering with 4 clusters of sizes 69, 1, 38, 15

Cluster means:

SPCompIndexPercentChange CPIPercentChange LongInterestRatePercentChange

1 0.5091327 0.04409966 0.1741670

2 -6.9010521 -1.21471372 -4.3700644

3 -0.3859141 -0.53345867 -0.7321102

4 -0.9042914 1.22955111 1.3448485

RealEarningsPercentChange CyclicallyAdjPEPercentageChange

1 0.1963760 0.5410223

2 -2.4270359 -5.8070446

3 -0.2155105 -0.3902915

4 -0.1955674 -1.1128277

CAPETotalPercentageChange RealTotalBondReturnPercentageChange

1 0.5399368 -0.1757434

2 -5.7976262 3.5727109

3 -0.3852076 0.8583392

4 -1.1213416 -1.6042206

Clustering vector:

[1] 1 1 1 3 1 4 1 1 1 3 1 1 1 3 1 3 3 1 3 3 1 3 1 3 3 1 1 3 4 4 3 3 3 1 1 3 3

[38] 3 1 1 3 3 1 1 1 1 1 1 1 1 1 3 1 1 1 3 1 1 1 1 1 4 3 3 1 1 1 1 1 4 3 3 3 1

[75] 1 1 3 3 3 3 1 3 1 1 1 3 2 3 1 1 1 1 3 1 1 1 1 1 4 1 1 1 1 1 1 4 1 3 4 4 4

[112] 4 4 4 3 1 4 4 1 3 1 1 3

Within cluster sum of squares by cluster:

[1] 225.55854 0.00000 115.11444 77.20815

(between_SS / total_SS = 51.1 %)

Available components:

[1] "cluster" "centers" "totss" "withinss" "tot.withinss"

[6] "betweenss" "size" "iter" "ifault"

Based on the analysis of the output data and visualizations, k=4 was selected

Although one cluster contained only one data point, it was not eliminated as an outlier: stock market traders face extreme events, and these shocks should not be ignored because significant shifts can severely affect portfolios.
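Output of the kind shown above comes from printing a kmeans() fit. The sketch below fits four clusters to synthetic standardized data of the same shape and pulls out the components listed under "Available components"; since the data are random stand-ins, the sizes, means, and percentages will not match the project's results.

```r
# Sketch: fit k = 4 and inspect the components of the result (synthetic data).
set.seed(123)
x   <- scale(matrix(rnorm(123 * 7), ncol = 7))  # NOT the S&P 500 data
fit <- kmeans(x, centers = 4, nstart = 25)

fit$size       # number of observations in each cluster
fit$centers    # cluster means, one row per cluster
fit$cluster    # cluster label for every observation
fit$withinss   # within-cluster sum of squares, by cluster

# Share of total variation explained by the clustering (between_SS / total_SS).
pct_between <- round(100 * fit$betweenss / fit$totss, 1)
```

A cluster of size 1, like the one discussed above, would appear in fit$size as a 1 and in fit$withinss as a 0.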

Statistical Modeling

Cluster 1 (69 observations)

Moderate increase in the S&P 500, with cluster mean ≈ 0.51 (in standardized units)

Slight increase in CPI ≈ 0.04 (a metric to reflect inflation)

Small increase in long interest rate ≈ 0.17 (Federal Reserve responds to increasing inflation)

Small increase in real earnings ≈ 0.20

Significant increase in CAPE ≈ 0.54 (a positive predictor for market valuation increase)

Small decrease in total bond return ≈ -0.18 (investors often move assets out of fixed income into stocks)

Cluster 2 (1 observation)

Contains only one data point, with the S&P 500 nearly 7 standard deviations below the mean (≈ -6.90), indicating a market crash

Decreases of more than one standard deviation in:

Consumer price index ≈ -1.21 (deflation)

Long interest rates ≈ -4.37 (the Federal Reserve rapidly cutting interest rates)

Real earnings ≈ -2.43 and CAPE ≈ -5.81

Total bond returns significantly increased ≈ 3.57 (consistent with traders moving money out of a crashing stock market into the safe haven of bonds)

Cluster 4 (15 observations)

Significant decline in the stock market ≈ -0.90

Significant increase in consumer price index ≈ 1.23 (inflation)

Significant long interest rate increase ≈ 1.34

Small decline in real earnings ≈ -0.20

Significant decline in CAPE ≈ -1.12

Significant decline in total bond return of ≈ -1.60

Conclusion

K-means grouped related metrics into clusters that a trader could potentially use to improve returns when investing in the S&P 500

Traders could use K-means as a signal to buy and sell the S&P 500

Traders could monitor the long interest rate, consumer price index, earnings, bond returns, and CAPE ratios to optimize a portfolio

Combining K-means with other statistical tools, like regression analysis, could yield useful predictors of the market

The statistician must be judicious when determining the number of clusters